Consent Is Not Privacy: Why We Need to Rethink Data Protection

Consent has become the default answer to data privacy. Most modern privacy laws and data-sharing frameworks are built around this idea. But consent alone does not equal privacy. It is broken, incomplete, and often misused.

Written by Kush Kanwar

Feb 16, 2026

Consent has become the default answer to data privacy. Click a box, sign a form, and your data is supposedly protected. Most modern privacy laws and data-sharing frameworks are built around this idea. GDPR, India’s DPDP Act, the Account Aggregator (AA) ecosystem, and emerging rails such as the Unified Lending Interface (ULI) all lean on consented sharing as the primary safeguard. But consent alone does not equal privacy. It is broken, incomplete, and often misused.

The two‑fold challenge: consent fails at the start and at the end

Consent is supposed to do two jobs. It should help people understand what they are agreeing to before sharing data. It should also act as a control surface for how that data is used after sharing.

In reality, both edges are weak. First, users often operate under an illusion of understanding. Second, once data leaves their control, there is almost no way to verify or limit how it is processed. Consent becomes a one‑time checkbox rather than a living control system.

The next sections unpack this two‑fold challenge.

The consent illusion: awareness without real understanding

Users are rarely aware of what they are consenting to. When they click "I agree," they are accepting terms buried in dense legal language. A 2023 PwC survey found that only 9% of organizations sought consent that was truly free, specific, and informed. Only 2% provided multilingual consent.

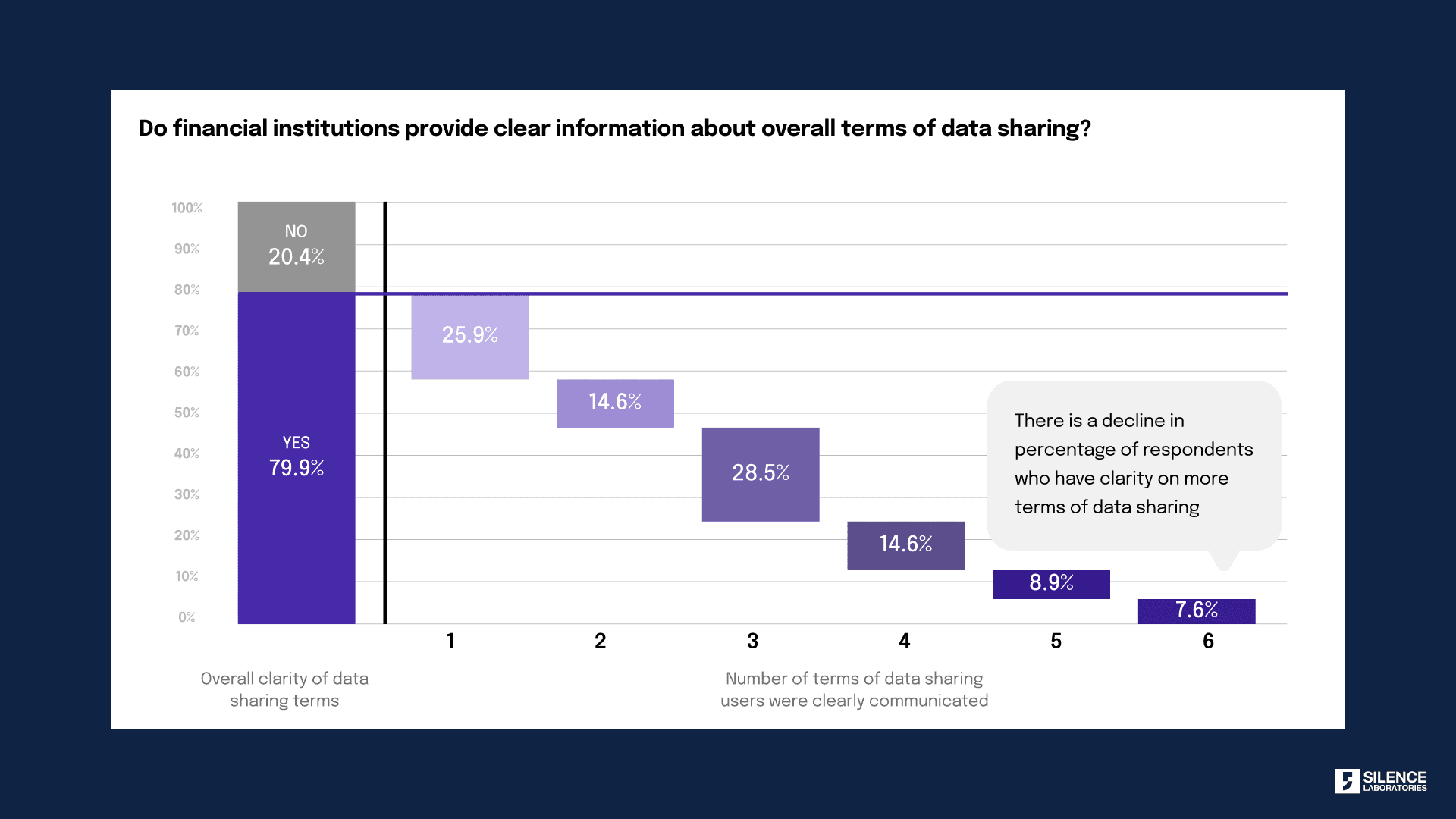

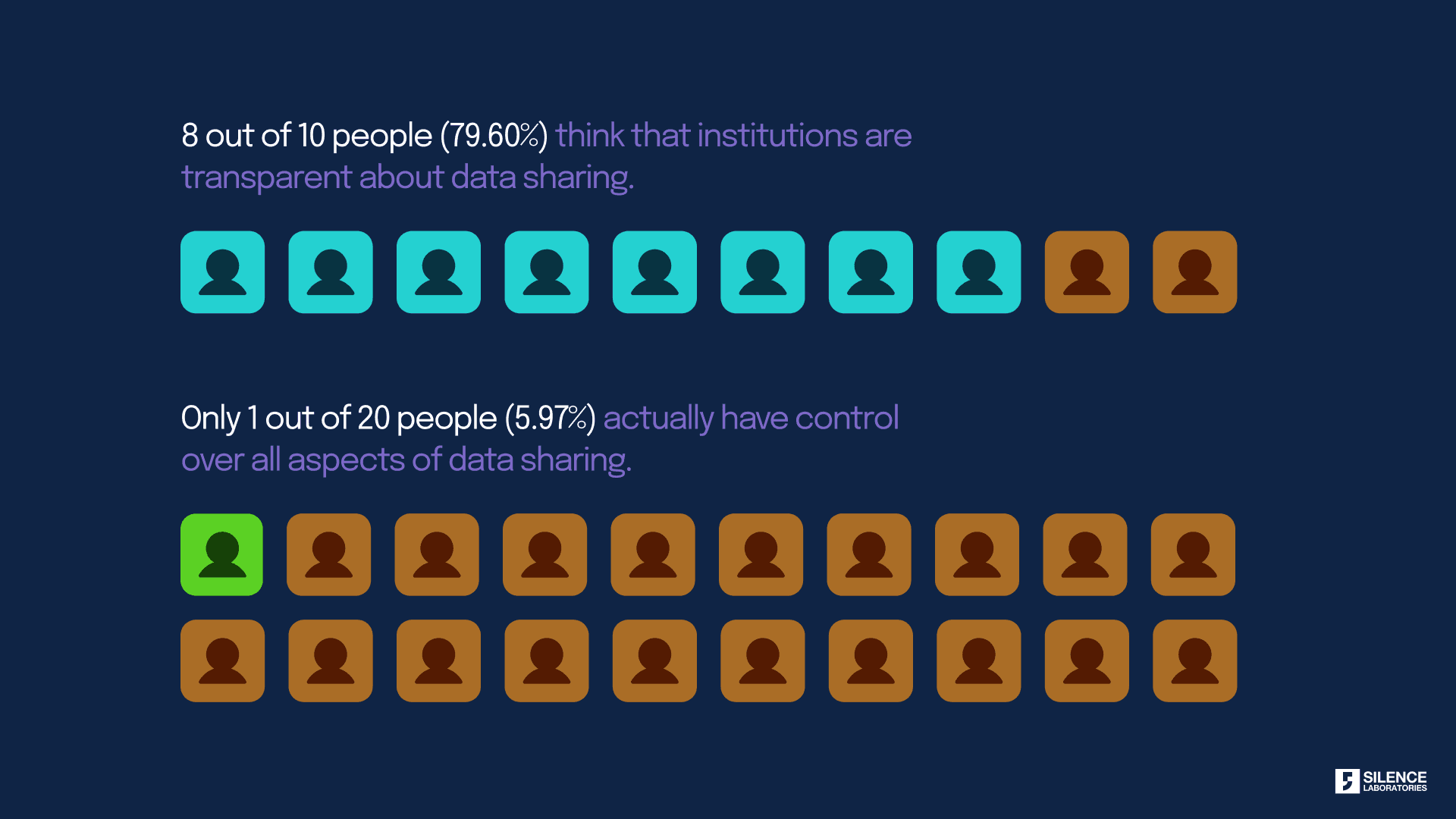

Even when users believe they understand, the reality looks different. A Silence Laboratories survey revealed that while 80% of customers believed they had a clear understanding of data-sharing terms, their clarity dropped sharply when confronted with specific details. Many struggled to answer basic questions about consent revocation, data retention, and who exactly could access their data. Only 1 in 20 people reported having control over all aspects of data sharing.

This is the consent illusion. Interfaces give a feeling of control without delivering real comprehension or usable levers. The friction is low. The consequences are high. Users move ahead because they want the service, not because they have internalised what will happen to their data.

Even when users fully understand what they are consenting to, consent only governs whether data is shared correctly. It does not control what happens next. Once data leaves the user's control, how it is used becomes a black box.

Examples: what the consent illusion looks like in practice

The consent illusion shows up in very ordinary financial journeys.

Bundled purposes in a single checkbox

A loan or wealth‑management app presents one generic checkbox that covers onboarding, risk profiling, cross‑selling, analytics, and third‑party sharing. The user sees a single “Agree and continue” button. The interface does not separate what is strictly necessary for the service from what is optional, such as marketing or data monetisation.

Fine print that hides meaningful choices

A bank’s online account opening flow uses clear, friendly screens for KYC and product selection. The real conditions for data reuse and onward sharing sit inside a long privacy policy linked in small text. Revocation rights, retention limits, and data‑sharing with group entities or partners are technically disclosed, but in a format that almost nobody reads or understands in context.

All‑or‑nothing “consent walls”

A credit‑scoring or personal finance app asks for broad access to SMS, contacts, and location. The choice is accept everything or abandon the service. There is no granular toggle for “share only what is needed for this feature”. Users who need the service have no realistic alternative, so they consent under pressure.

In each case, the user interface signals that consent has been captured correctly. From the user’s perspective, they did the “right” thing. From a privacy perspective, their control is shallow. The legal record of consent exists. The practical ability to shape or withdraw that consent does not.

The Indian Reality: Consent does not prevent misuse

India has seen alarming examples where consent mechanisms failed to protect users:

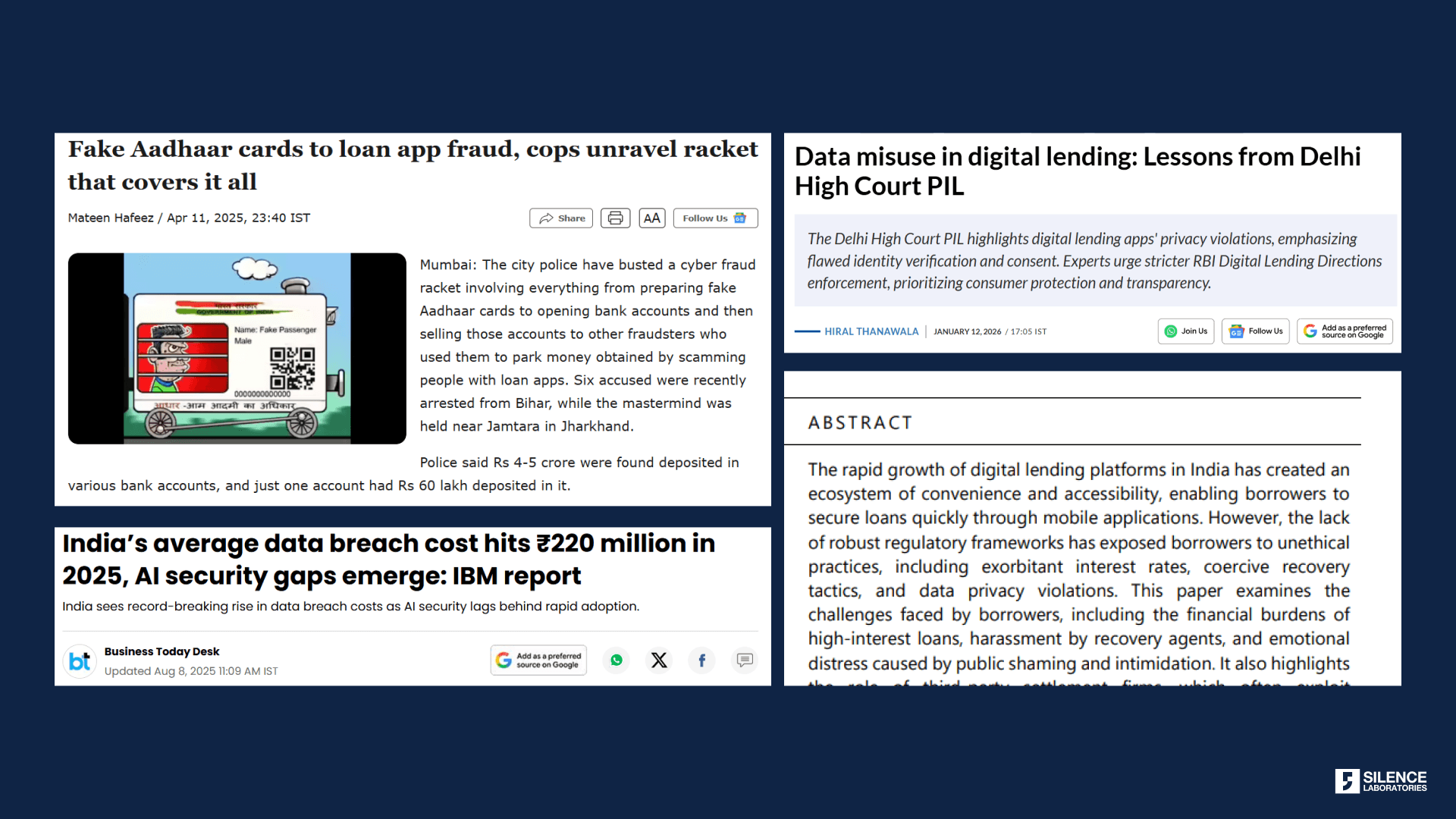

Digital Lending Apps (2025-2026): A public interest litigation (PIL) before the Delhi High Court highlighted that digital lending platforms accessed sensitive data such as contact lists, call logs, and location information without necessity. Users consented to loan processing, but their data was used for harassment, in breach of their constitutional rights. The petition emphasized how flawed identity verification and coercive consent practices turned consent into a mere formality.

Aadhaar Misuse for Loans (2025): Unethical lending platforms used Aadhaar data to file unauthorized loan applications, even where users had given "apparent consent". This violated the Aadhaar Act and the fundamental right to privacy under Article 21 of the Constitution. Users gave consent without understanding how their biometric data would be used or stored.

Shadow AI and Unauthorized Data Processing (2025): Shadow AI refers to AI tools used within an organization without IT oversight. IBM's 2025 Cost of a Data Breach Report found that shadow AI added ₹17.9 million to average breach costs in India. In these cases, user data was processed by AI systems without clear consent or transparency about how the algorithms were using it.

As Rahul Matthan, Partner at Trilegal and head of their Technology, Media and Telecom practice, notes in his article "Tech Solutions for Use Limitation", “Once an organization receives data, there is nothing that prevents them from using it for purposes other than what was consented for.”

Data Breaches: Penalties cannot undo damage

India's Digital Personal Data Protection Act 2023, now operationalized by the DPDP Rules 2025, carries significant penalties: fines of up to ₹250 crore for failing to take reasonable security safeguards against a personal data breach, up to ₹200 crore for failing to notify the Data Protection Board and affected individuals of a breach, and up to ₹150 crore for violating the additional obligations of Significant Data Fiduciaries.

But penalties cannot undo damage. Once data is breached, the harm is done. User trust is eroded. Sensitive information is exposed. Unlike passwords or credit cards, identity data cannot be reset. You cannot change your fingerprints. You cannot get a new face for facial recognition. You cannot be issued a new date of birth. Once national ID details or biometric data are compromised, they remain vulnerable for life.

Financial compensation does not restore privacy.

The Real Solution: Enforcing consent terms in data usage

The paradigm needs to shift. Consent exists, but it cannot be enforced once data leaves the user's control. The real fix is to bind consent to computation cryptographically, making it mathematically impossible to process data beyond agreed terms.

This is where Privacy Enhancing Technologies (PETs) come in. At Silence Laboratories, we build technology that ties consent directly to what can be computed.

Binding consent to compute

The solution has two parts:

Consent for inferences, not raw data. Users agree to share specific insights (credit score, fraud risk, income stability), not their full financial history. The system never moves raw data. It moves only the consented inference.

Computation bound to consent. Every operation on user data is cryptographically verified against the consent artifact. The system checks: "Is this computation permitted?" If not, it does not execute. Purpose limitation becomes architectural, not aspirational.
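To make the "Is this computation permitted?" check concrete, here is a minimal Python sketch under clearly stated assumptions: the consent artifact is a small record naming the user, the processor, and the permitted inferences, and an HMAC tag stands in for whatever signature scheme a production consent manager would use. All names (`issue_consent`, `is_permitted`, `run_inference`, the inference labels, the demo key) are hypothetical. The sketch runs on plaintext data, so it illustrates only the gatekeeping step, not how the computation itself is protected.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, replace

# Hypothetical demo key held by the consent manager. A real system would use
# asymmetric signatures and proper key management instead of a shared secret.
CONSENT_KEY = b"demo-consent-manager-key"


@dataclass(frozen=True)
class ConsentArtifact:
    user_id: str
    processor: str
    permitted_inferences: frozenset
    tag: str = ""  # HMAC over the fields above


def _sign(user_id, processor, inferences):
    # Canonical JSON so the same consent always produces the same tag.
    payload = json.dumps(
        {"user": user_id, "processor": processor, "inferences": sorted(inferences)},
        sort_keys=True,
    ).encode()
    return hmac.new(CONSENT_KEY, payload, hashlib.sha256).hexdigest()


def issue_consent(user_id, processor, inferences):
    inferences = frozenset(inferences)
    return ConsentArtifact(user_id, processor, inferences, _sign(user_id, processor, inferences))


def is_permitted(artifact, processor, inference):
    expected = _sign(artifact.user_id, artifact.processor, artifact.permitted_inferences)
    if not hmac.compare_digest(expected, artifact.tag):
        return False  # artifact was altered after the user consented
    return processor == artifact.processor and inference in artifact.permitted_inferences


# Hypothetical inference functions; each returns an insight, never the raw data.
INFERENCES = {
    "average_monthly_income": lambda incomes: sum(incomes) / len(incomes),
    "income_stability": lambda incomes: min(incomes) / max(incomes),
}


def run_inference(artifact, processor, inference, monthly_incomes):
    if not is_permitted(artifact, processor, inference):
        raise PermissionError(f"{inference!r} is not covered by this consent")
    return INFERENCES[inference](monthly_incomes)


if __name__ == "__main__":
    consent = issue_consent("user-42", "lender-a", {"income_stability"})
    print(run_inference(consent, "lender-a", "income_stability", [40_000, 50_000]))  # 0.8
    # Widening the scope without re-signing invalidates the artifact.
    forged = replace(consent, permitted_inferences=frozenset(INFERENCES))
    print(is_permitted(forged, "lender-a", "average_monthly_income"))  # False
```

The design point is that permission is checked before anything runs, and the artifact cannot be quietly widened: changing the permitted inferences without the consent manager's key breaks the tag.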

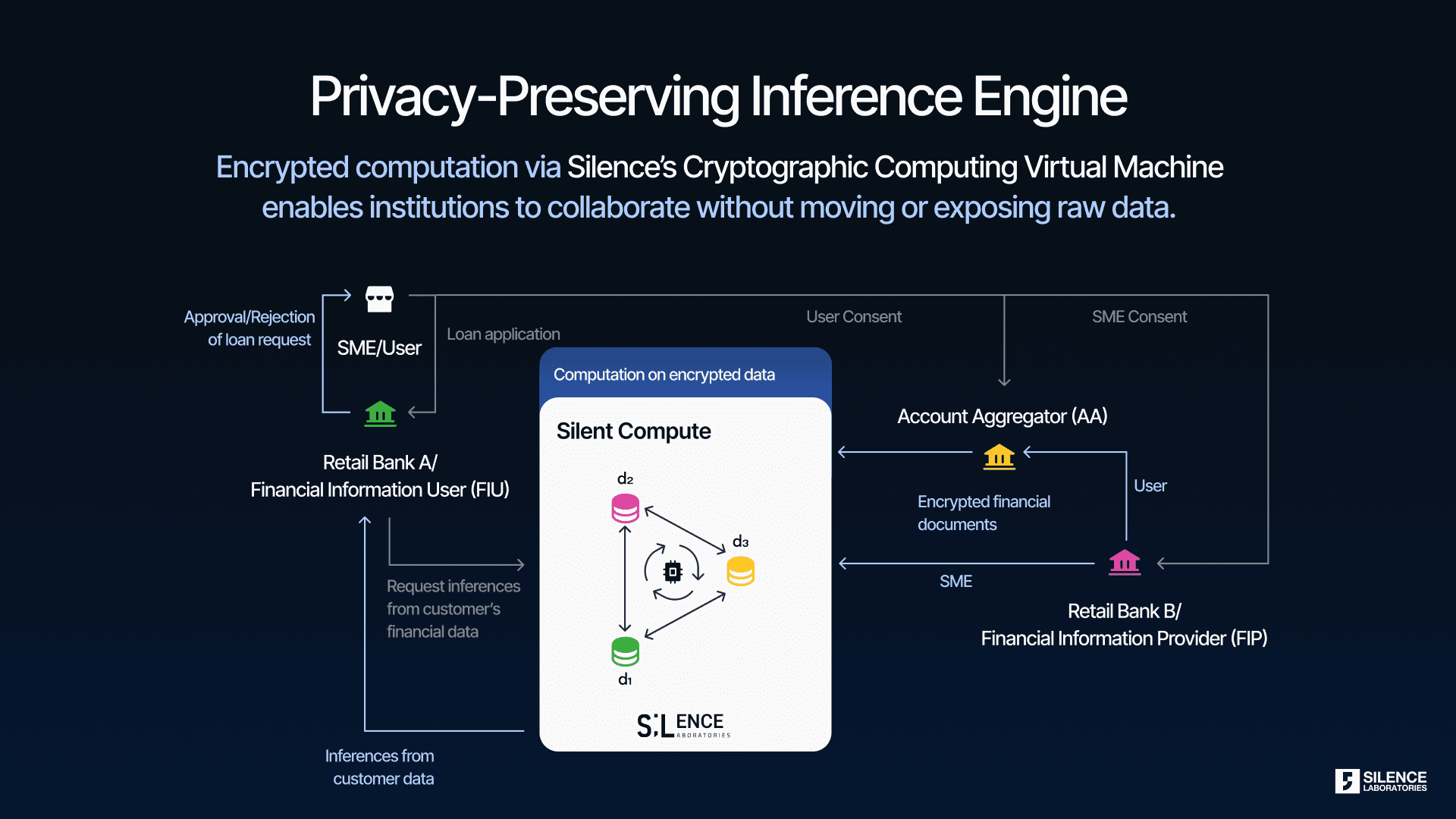

How Multi-Party Computation enforces consent

Multi-Party Computation (MPC) enables computation on encrypted data across multiple parties without any single party seeing the raw data. Here's how it works:

Data stays distributed: Sensitive data is split into encrypted shares across legally isolated computing nodes. No single entity holds the complete data.

Computation without exposure: Insights are extracted from encrypted data. Raw data never needs to be decrypted or centralized.

Consent bound to compute: Every computation is tied to the specific consent provided by the user. The system cryptographically verifies that only permitted operations are executed.

Purpose limitation by code: Unlike traditional systems where purpose limitation is a policy promise, MPC enforces it mathematically. It becomes impossible to use data beyond what the user consented to.
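The "data stays distributed" and "computation without exposure" steps rest on secret sharing. A minimal illustration is additive secret sharing over a prime field, sketched here in Python: each value is split into random-looking shares, one per node, and nodes can add shared values locally. This is a textbook construction assumed for illustration, not Silence Laboratories' protocol; it covers only addition among honest-but-curious nodes, whereas real MPC deployments also need protocols for multiplication and comparison, secure channels, and defenses against malicious nodes. Function names and balances are hypothetical.

```python
import secrets

# Public prime modulus for share arithmetic (2**61 - 1 is a Mersenne prime).
PRIME = 2**61 - 1


def split(value, n_nodes=3):
    """Split an integer in [0, PRIME) into n additive shares that sum to it mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_nodes - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares):
    return sum(shares) % PRIME


def add_shared(a_shares, b_shares):
    # Node i adds its share of a to its share of b; no node sees a or b.
    return [(a + b) % PRIME for a, b in zip(a_shares, b_shares)]


if __name__ == "__main__":
    bank_a = split(120_000)  # hypothetical balance held by bank A
    bank_b = split(80_000)   # hypothetical balance held by bank B
    # Node i receives bank_a[i] and bank_b[i]; each share alone is uniformly random.
    total_shares = add_shared(bank_a, bank_b)
    print(reconstruct(total_shares))  # 200000: only the agreed output is revealed
```

Any single node's view is statistically independent of the inputs, which is what lets the raw balances stay private while the agreed result is still computable.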

Mathematically impossible to misuse

This is true privacy. Not relying on trust, policies, or promises. The architecture makes it mathematically impossible to access or use data beyond the consented scope.

As demonstrated in our work with Proxtera on cross-border MSME underwriting, lenders in Singapore can validate the creditworthiness of businesses in Ghana without raw data ever leaving Ghana's borders. We deployed Financial Information Compute Units (FICUs) using MPC, running credit checks on secret-shared data. No raw data moves. Inferences do. Consent is cryptographically enforced, not just legally promised.

This model won the G20 TechSprint 2025 for Trust and Integrity in Scalable and Open Finance.

Purpose limitation and data minimization without compromising value

Consent frameworks promise two things: data used only for agreed purposes (purpose limitation) and only necessary data collected (data minimization). Traditional systems rely on contracts. Our approach enforces them cryptographically.

Purpose limitation by code

Every computation is tied to user consent. Users consent to specific inferences (credit score, fraud risk), not blanket data access. Processors submit purpose-bound operational codes that are verified against consent before execution. If the operation is not permitted, it does not run. Purpose limitation shifts from a legal promise to an architectural guarantee.
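One way to read "purpose-bound operational codes" is that consent records a hash of the exact operation a processor may run, so the operation itself, not just a purpose label, must match. The Python sketch below assumes that interpretation; the operation spec format, `spec_hash`, `execute`, and the `OPERATIONS` table are hypothetical, and the data is processed in the clear rather than inside MPC nodes.

```python
import hashlib
import json

# Hypothetical operations the compute nodes know how to run.
OPERATIONS = {
    "sum": sum,
    "mean": lambda xs: sum(xs) / len(xs),
    "max": max,
}


def spec_hash(spec):
    """Hash a canonical JSON encoding so identical specs always match."""
    return hashlib.sha256(json.dumps(spec, sort_keys=True).encode()).hexdigest()


def execute(spec, consented_hashes, record):
    """Run a purpose-bound operation only if its exact spec was consented to."""
    if spec_hash(spec) not in consented_hashes:
        raise PermissionError(f"operation for purpose {spec.get('purpose')!r} not consented")
    return OPERATIONS[spec["op"]](record[spec["field"]])


if __name__ == "__main__":
    underwriting = {"purpose": "credit_underwriting", "op": "mean", "field": "monthly_income"}
    consented = {spec_hash(underwriting)}  # recorded when the user gives consent
    record = {"monthly_income": [40_000, 50_000, 60_000], "contacts": ["contact-1", "contact-2"]}

    print(execute(underwriting, consented, record))  # 50000.0

    # Same purpose label, different operation and data: the hash no longer matches.
    snooping = {"purpose": "credit_underwriting", "op": "max", "field": "contacts"}
    try:
        execute(snooping, consented, record)
    except PermissionError as err:
        print("blocked:", err)
```

Binding the hash of the whole spec means a processor cannot reuse a legitimate purpose label to reach fields the user never agreed to expose.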

Data minimization without losing utility

Traditional data minimization forces a tradeoff: collect less, learn less. Our model breaks that. Raw data never moves. It stays encrypted and distributed. Only inferences are extracted. A lender gets a credit score, not full transaction history. No duplication, no breach surface.
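The idea that a lender receives a credit score rather than the transaction history can be illustrated for the simplest case, a linear score with public weights. Under additive secret sharing, each node can apply such a function to its own shares, and only the combined result is reconstructed. This Python sketch assumes hypothetical feature names and weights, simulates all nodes in one process, and does not attempt the non-linear steps (thresholds, comparisons) that real credit models and MPC protocols would require.

```python
import secrets

PRIME = 2**61 - 1  # public prime modulus for share arithmetic

# Hypothetical public scoring weights; integers keep share arithmetic exact.
WEIGHTS = {"avg_income_thousands": 2, "on_time_payments": 50, "months_of_history": 10}


def split(value, n_nodes):
    """Additively secret-share a non-negative integer across n_nodes."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_nodes - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def partial_score(node_shares):
    # A public linear function can be applied to shares locally: each node
    # weights its own shares and never needs the underlying feature values.
    return sum(w * node_shares[name] for name, w in WEIGHTS.items()) % PRIME


def score_on_shares(features, n_nodes=3):
    """Simulate n_nodes computing a linear credit score on secret-shared features."""
    shared = {name: split(v, n_nodes) for name, v in features.items()}
    # Node i holds only the i-th share of each feature.
    per_node = [{name: s[i] for name, s in shared.items()} for i in range(n_nodes)]
    partials = [partial_score(shares) for shares in per_node]
    # Only the final inference is reconstructed and released to the lender.
    return sum(partials) % PRIME


if __name__ == "__main__":
    applicant = {"avg_income_thousands": 50, "on_time_payments": 11, "months_of_history": 24}
    print(score_on_shares(applicant))  # 2*50 + 50*11 + 10*24 = 890
```

Because the weighted sum distributes over the shares, the nodes' partial results add up to exactly the score the lender would have computed on raw data, while no node ever holds a raw feature.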

Unlocking more value

Privacy restrictions usually limit what data can be used. This approach unlocks datasets that were too sensitive to share. Banks can participate without losing control. Processors tap richer datasets (medical records, cross-border financial data) for inference without privacy risk. More data improves analytics quality and enables new business cases.

This creates a marketplace for verified functions with usage-based revenue sharing. Privacy becomes an enabler, not a constraint.

The Path Forward

Consent is necessary but not sufficient. Privacy requires architectural change. Computation must be bound to consent terms through cryptography, not just contracts.

At Silence Laboratories, we are building that future. One where privacy is provable, not promised. Where consent means control, not just a checkbox.

To learn more about consent, read Rahul Matthan’s article: Tech Solutions for Use Limitation

Learn about our work towards incorporating consent and privacy: Open Finance Revisited: Strengthening Data Governance with Cryptographic Privacy and Auditability